The Backstory

Vinext is a Vite plugin that reimplements the public Next.js API surface. Routing, server rendering, next/* module imports, the CLI. It replaces the internals so existing Next.js applications can run on a different toolchain, with Cloudflare Workers as the primary deploy target.

Cloudflare's blog post describes the build: one engineer (technically an engineering manager) planned the architecture and iterated through AI sessions. The first commit landed on February 13, 2026. By evening, Pages Router and App Router basic SSR were working, along with middleware, server actions, and streaming. By the end of the week, the project had 1,700+ Vitest tests and 380 Playwright E2E tests ported from Next.js's own test suite.

Cloudflare's own disclaimer: "We want to be clear: vinext is experimental. It's not even one week old, and it has not yet been battle-tested with any meaningful traffic at scale."

Two days after launch, Guillermo Rauch (Vercel CEO) posted publicly: "We've identified, responsibly disclosed, and confirmed 2 critical, 2 high, 2 medium, 1 low security vulnerabilities in Cloudflare's vibe-coded framework Vinext." Vercel simultaneously published a "Migrate to Vercel from Cloudflare" guide.

Then Hacktron, an autonomous offensive security platform, ran a full independent audit. They found 45 vulnerabilities and manually validated 24 after removing false positives and low-severity findings. Four were critical: race conditions enabling session hijacking via AsyncLocalStorage state pollution, cache poisoning through missing auth headers in cache keys, middleware bypass via double-encoded payloads, and API routes silently excluded from middleware. Eight were high-severity, including SSRF, ACL bypass, path traversal, and routing bypass.

From Hacktron's write-up: "The model's objective is not 'be secure.' It is 'pass the tests,' and it will take whatever route gets it there."

Security researcher Sam Curry noted that one of the path parsing vulnerabilities matched a bug he had reported in Next.js two years earlier. The AI appeared to have reproduced a known vulnerability pattern from the original framework.

The Two Projects

Next.js is maintained by Vercel and a large contributor base. TypeScript and JavaScript, with a Rust-based Turbopack build layer. Years of development, thousands of PRs, extensive production exposure across millions of sites.

Vinext was built by one person directing AI through 800+ sessions over less than a week. TypeScript. Its test-to-code ratio is more than double Next.js's, but the raw size difference is 15x.

Both projects were run through the same Octokraft pipeline: language-appropriate static analyzers, tree-sitter extraction into a PostgreSQL-backed knowledge graph, automated convention detection, and LLM-powered behavioral analysis. Same rubric. Same weights.

| Metric | Next.js | Vinext |
|---|---|---|
| Overall Score | 87.8 (A-) | 80.0 (B+) |
| Total LOC | 1,832,916 | 122,393 |
| Test LOC | 509,843 | 75,910 |
| Test/Code Ratio | 0.278 | 0.620 |
| Total Issues | 2,065 | 203 |
| Critical Issues | 2 | 2 |
| High Issues | 8 | 7 |
| Primary Language | JavaScript | TypeScript |
| PR Count | 1,794 | 40 |

Overall Scores: 87.8 vs 80.0

Next.js scores 87.8 (A-). Vinext scores 80.0 (B+). A 7.8-point gap. In the 24-project benchmark, Next.js ranked #6 overall and Vinext ranked #13.

The gap concentrates in three categories: test coverage (-26.2 points), code smells (-22.5 points), and consistency (-15.8 points). Vinext scores higher in one category (security) and ties in two others (duplication and compliance).

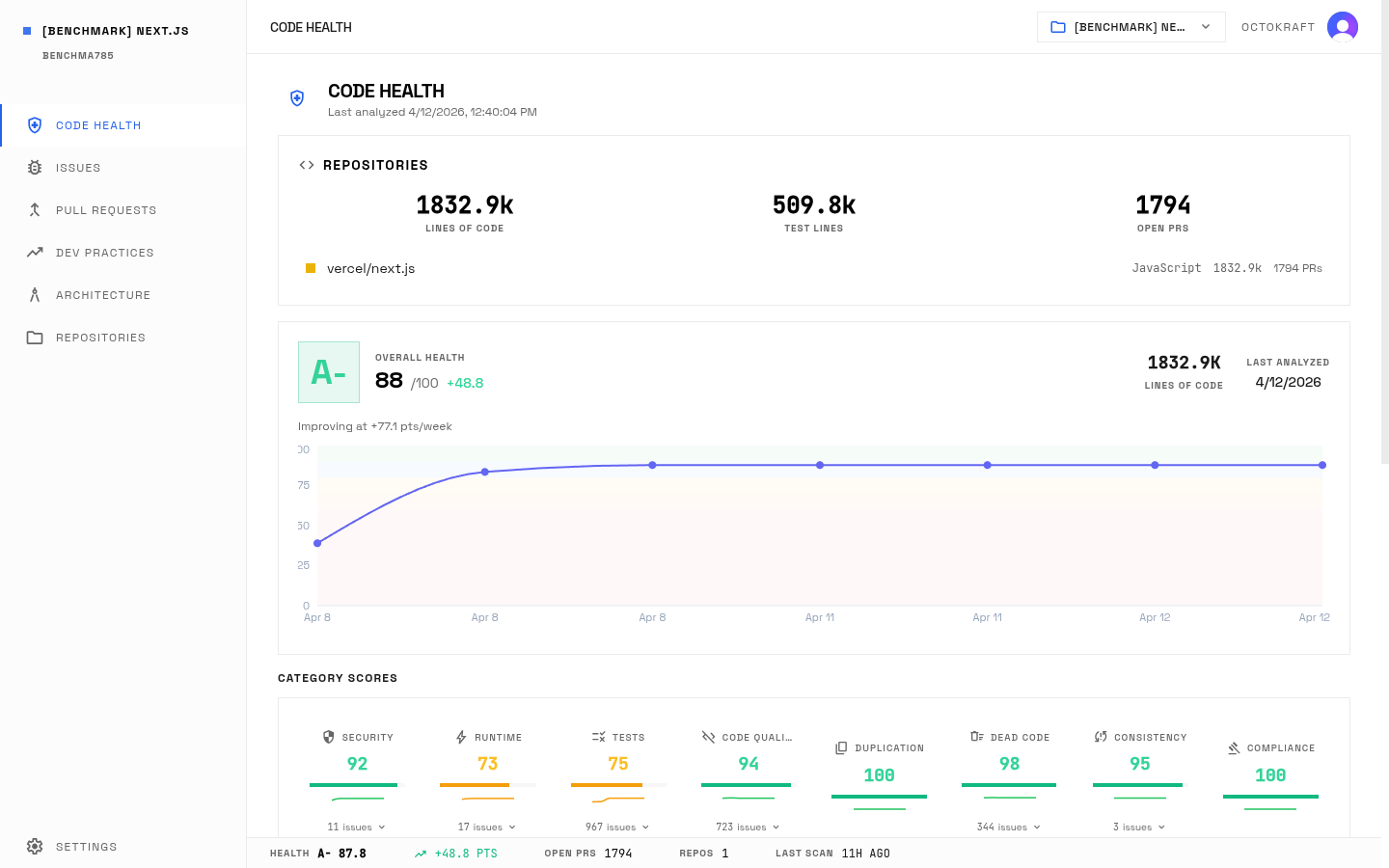

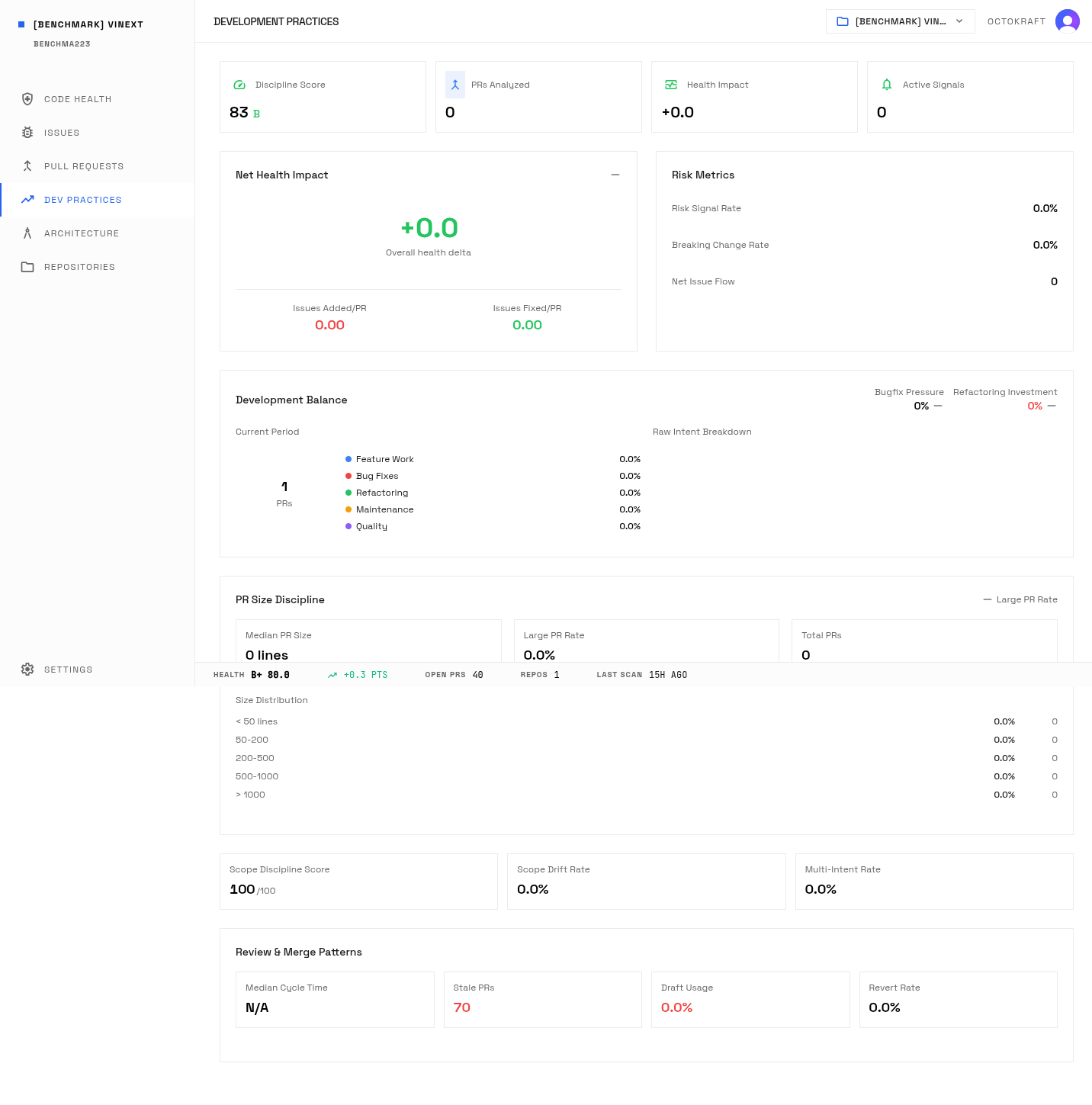

Next.js health dashboard: 87.8 overall (A-) across 1.83M lines.

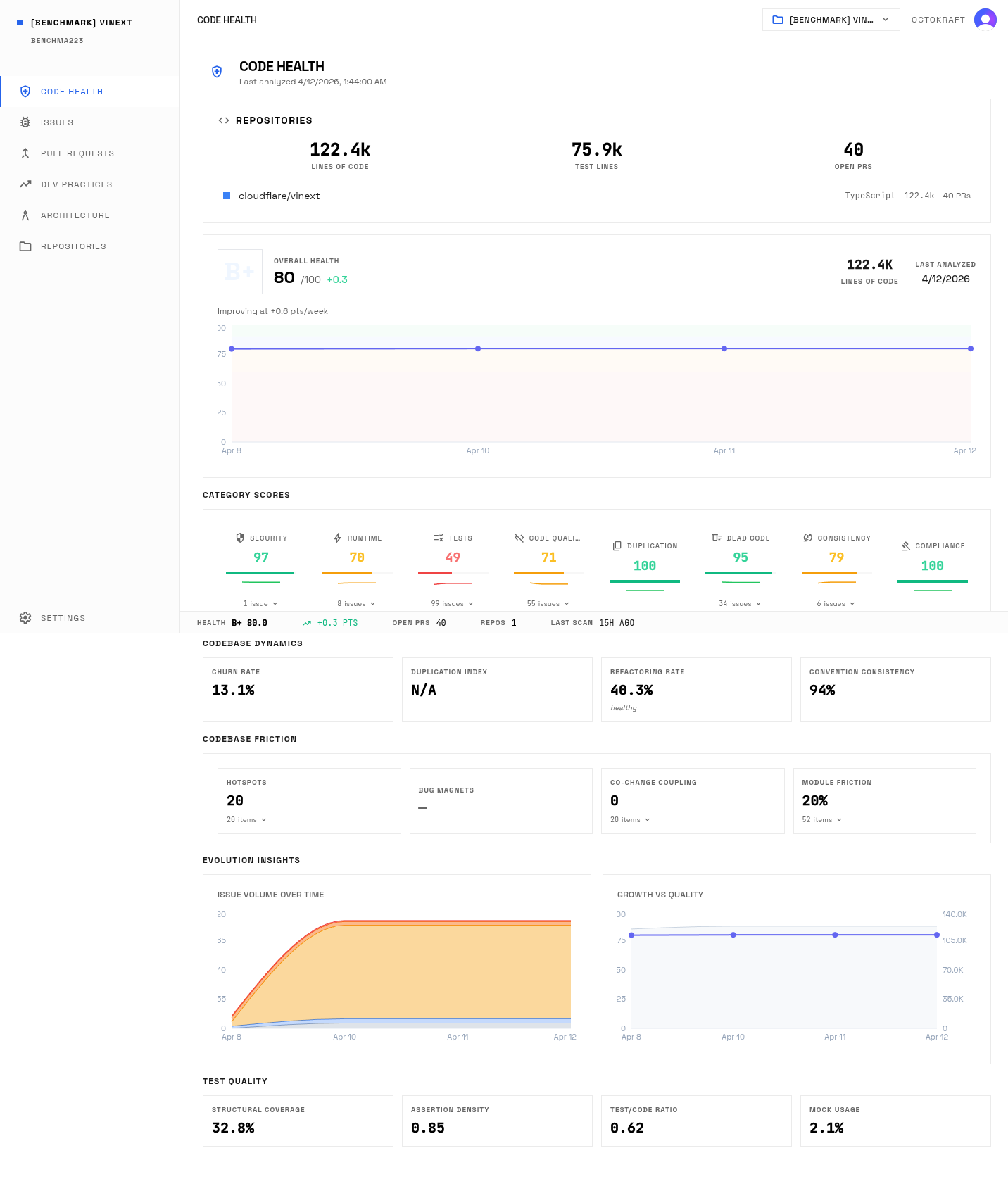

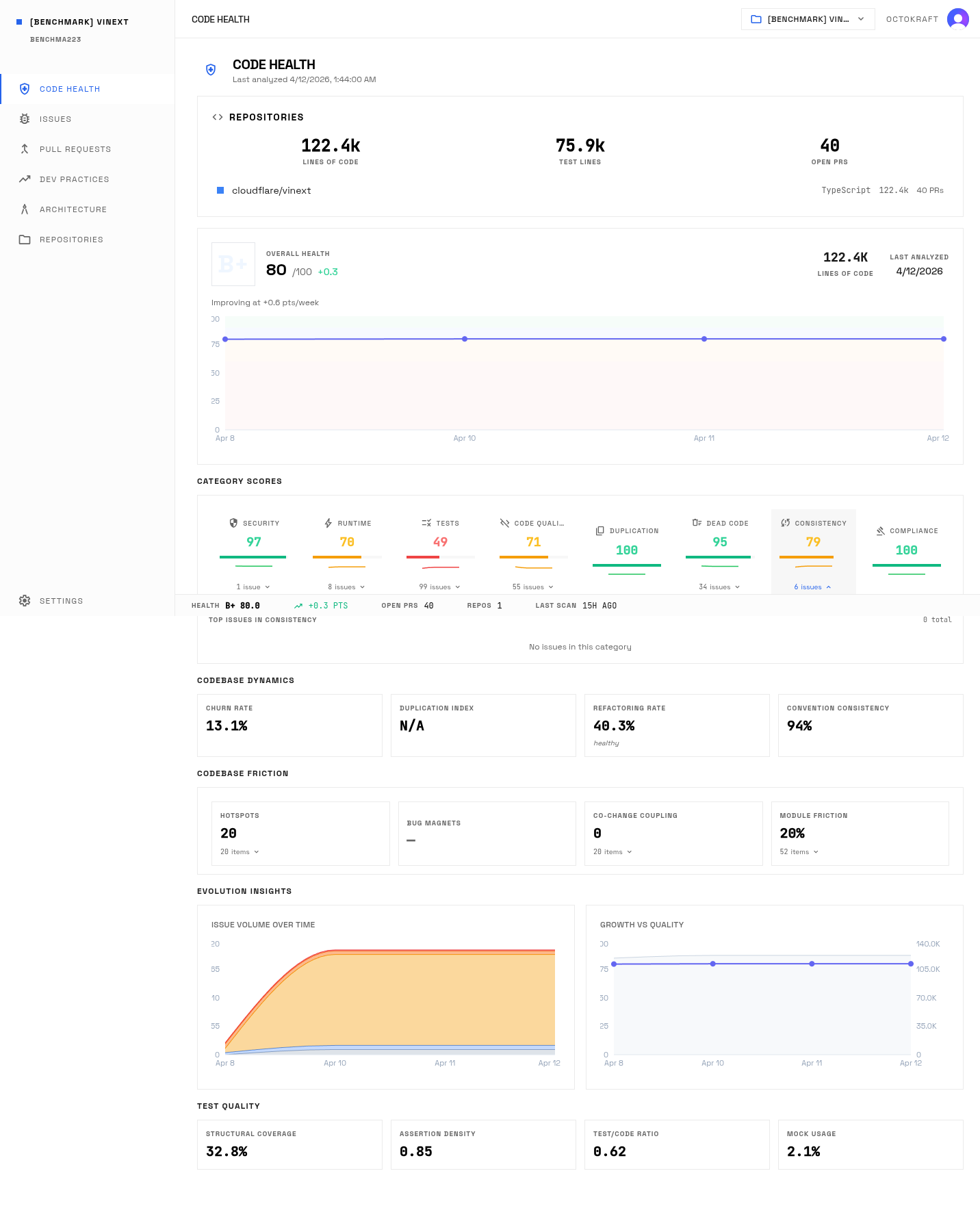

Vinext health dashboard: 80.0 overall (B+) across 122K lines.

Category by Category

| Category | Next.js | Vinext | Delta (Vinext minus Next.js) |
|---|---|---|---|
| Security | 91.7 | 96.9 | +5.2 |
| Runtime Risks | 72.5 | 70.3 | -2.2 |
| Test Coverage | 74.8 | 48.6 | -26.2 |
| Code Smells | 93.6 | 71.1 | -22.5 |
| Duplication | 100.0 | 100.0 | Tie |
| Dead Code | 98.2 | 94.7 | -3.5 |
| Consistency | 94.7 | 78.9 | -15.8 |
| Compliance | 100.0 | 100.0 | Tie |

Vinext's per-category breakdown: high security (96.9) and compliance (100) mask weaknesses in test coverage (48.6), code smells (71.1), and consistency (78.9).

Security: 96.9 vs 91.7

Vinext scores 96.9 on security. Next.js scores 91.7. Vinext has 1 security issue (a medium-severity finding where rewrite destination parameters could enable SSRF). Next.js has 11 security issues, including an SSRF vulnerability via Host header manipulation (high), CSRF protection bypass via null Origin header (medium), and path traversal in page bundle request handling (medium).

On the static analysis rubric, Vinext is the cleaner codebase. The AI did not introduce known-bad patterns: no SQL injection surfaces, no hardcoded secrets, no open path traversals in the code as written. But 31+ security vulnerabilities were found by external researchers that the static analysis pipeline did not catch. They live in how modules interact at runtime, not in any single code pattern. More on this below.

Test Coverage: 74.8 vs 48.6

The largest gap. Vinext has a 0.620 test-to-code ratio (test LOC divided by total LOC in the summary table), more than double Next.js's 0.278. The AI generated extensive tests. But the graph-based analysis identified 99 testing issues in Vinext: untested core functions, weak assertion patterns, and skipped production test suites.

Three high-severity testing findings in Vinext: the core handleClick navigation function has no unit tests (only SSR rendering is tested), a potential double-prefixing bug passes its test because the test contains the same error, and all ISR production tests are skipped. Next.js has 967 testing issues, mostly graph-rule findings across its 1.83M lines, but it has measurable structural coverage (12.6%) and an assertion density of 0.084 per test function.
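To make the "test contains the same error" finding concrete, here is a minimal sketch of how that kind of test can pass. It is an illustration only, not Vinext's code: `withBasePath`, the `/docs` base path, and both test functions are hypothetical names chosen for the example.

```typescript
// Hypothetical helper with a double-prefixing bug: it never checks whether
// the path already starts with the base path.
function withBasePath(basePath: string, path: string): string {
  return basePath + path;
}

// A test whose expected value is built with the same logic it is meant to
// check. It passes even though the result is "/docs/docs/guide".
function mirroredTestPasses(): boolean {
  const input = "/docs/guide";
  const expected = "/docs" + input; // repeats the bug instead of stating the intent
  return withBasePath("/docs", input) === expected;
}

// A test with an independently written, literal expectation. It fails,
// which is what exposes the bug.
function literalTestPasses(): boolean {
  return withBasePath("/docs", "/docs/guide") === "/docs/guide";
}

console.log(mirroredTestPasses()); // true
console.log(literalTestPasses());  // false
```

The first test contributes to test volume and to the test-to-code ratio while verifying nothing. That is one way a codebase can be test-heavy and still score low on test coverage quality.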

The architecture analysis flagged specific gaps in Vinext: RSC/SSR environment state isolation not tested, missing tests for middleware execution order, insufficient testing of error boundary resolution order, and missing tests for Cloudflare Workers-specific error paths. Those gaps map to the exact locations where the external security vulnerabilities were later found.

Runtime Risks: 72.5 vs 70.3

A 2.2-point gap, the smallest of any category that is not tied. Next.js has 17 runtime issues across 1.83M lines. Vinext has 8 in 122K lines.

Two of Vinext's are high-severity: getRequestContext() silently falls back to a detached context when AsyncLocalStorage is not available, and route handler execution has no timeout. The remaining six are medium-severity: unbounded ISR pending regeneration maps, unbounded KV tag cache growth, ISR cache memory growth without bounds, and three instances of silent error swallowing in critical paths.
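The unbounded-growth findings share one shape: a map that only ever gains entries. As a rough sketch of the usual mitigation, assuming a simple least-recently-used policy is acceptable, a size-capped map can look like the following. `BoundedMap` and its cap are hypothetical and do not come from Vinext or Next.js.

```typescript
// Hypothetical size-capped map that evicts the least recently used key.
// It relies on Map preserving insertion order.
class BoundedMap<K, V> {
  private readonly entries = new Map<K, V>();
  private readonly maxEntries: number;

  constructor(maxEntries: number) {
    this.maxEntries = maxEntries;
  }

  get(key: K): V | undefined {
    if (!this.entries.has(key)) return undefined;
    const value = this.entries.get(key) as V;
    // Re-insert so this key becomes the most recently used.
    this.entries.delete(key);
    this.entries.set(key, value);
    return value;
  }

  set(key: K, value: V): void {
    this.entries.delete(key);
    this.entries.set(key, value);
    if (this.entries.size > this.maxEntries) {
      // The first key in iteration order is the least recently used.
      const oldest = this.entries.keys().next().value as K;
      this.entries.delete(oldest);
    }
  }

  get size(): number {
    return this.entries.size;
  }
}

const pending = new BoundedMap<string, number>(2);
pending.set("/a", 1);
pending.set("/b", 2);
pending.get("/a");    // touch "/a" so "/b" becomes the oldest entry
pending.set("/c", 3); // exceeds the cap, evicts "/b"
console.log(pending.size, pending.get("/b")); // 2 undefined
```

A cap trades memory safety for occasional recomputation. Whether that trade suits a regeneration map or a tag cache depends on how expensive a miss is, which this sketch does not model.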

Next.js's runtime issues include one critical finding (unbounded graph traversal during invalidation) and a high-severity memory exhaustion bug under cascade invalidation. Both sit in a codebase that has been load-tested in production across millions of deployments. Vinext's unbounded caches and missing timeouts would cause resource exhaustion under sustained traffic. The gap is smaller, but the nature of the risk differs: Next.js's issues are documented. Vinext's have not been tested at scale.

Code Smells: 93.6 vs 71.1

A 22.5-point gap, the second largest. The analysis surfaced 55 code smell issues in Vinext, including 39 static analyzer findings.

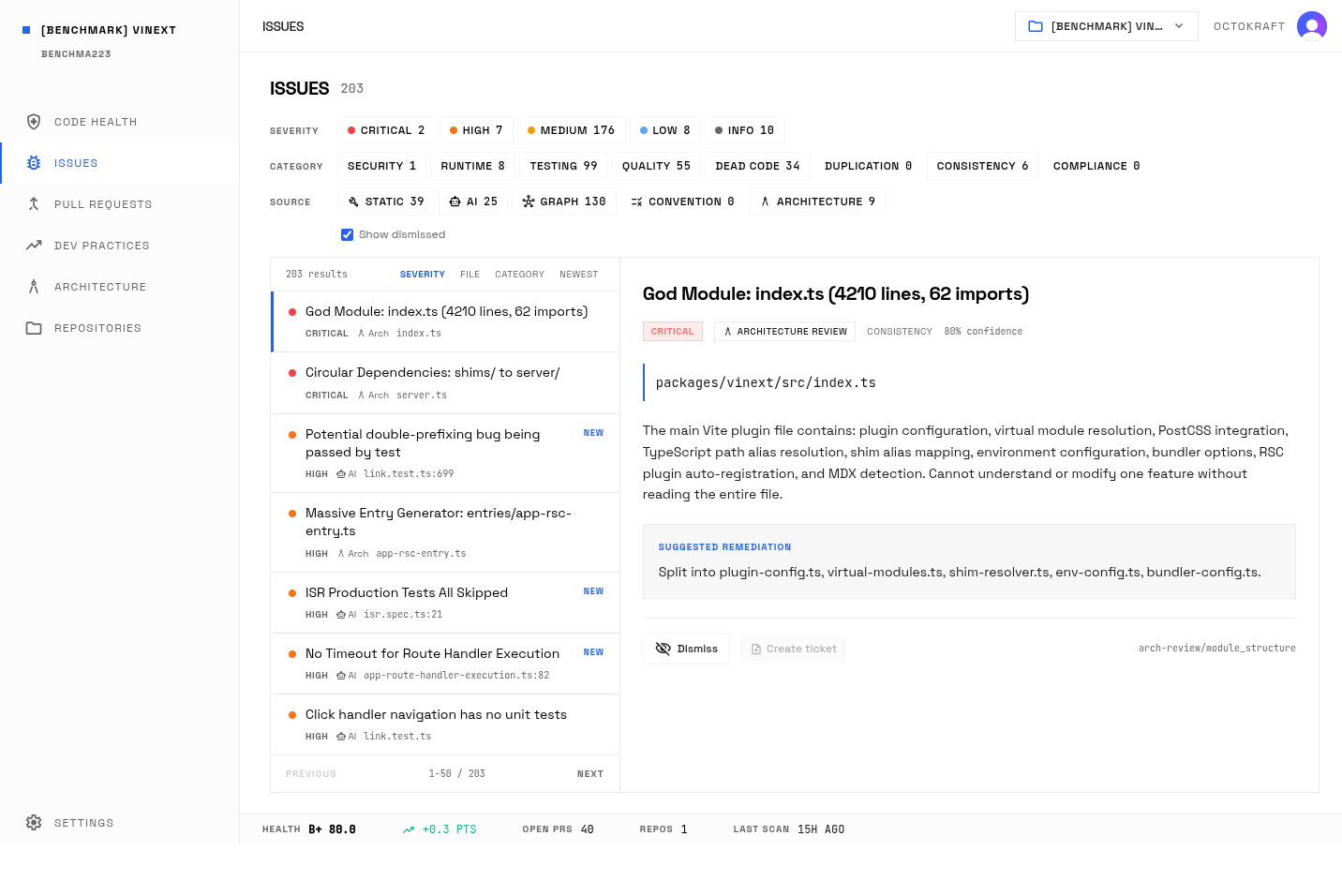

Vinext's two critical findings: the 4,210-line index.ts god module and circular dependencies between shims and server modules. The circular dependency chain means that modifying a shim module can break server behavior and vice versa, with no safe refactoring path that doesn't touch both sides simultaneously. Vinext carries 0.45 code smells per 1K LOC, higher than Next.js's 0.39.

Next.js has its own god module: app-render.tsx at 7,502 lines. It grew over years. Vinext's god modules arrived in days. The structural impact is the same: single points of failure that are difficult to test, review, or refactor in isolation.

Vinext's issue list: 203 total findings. The 4,210-line god module and circular dependencies between shims and server modules are the two critical code smell items.

Consistency: 94.7 vs 78.9

Convention adherence is 97.3% for Next.js and 93.9% for Vinext. The AI follows naming patterns and async/await conventions with high fidelity, but graph indexing detected 14 deviations in Vinext.

The consistency score measures architectural alignment, not surface-level style. The 15.8-point gap reflects real architectural inconsistencies: a god module with 62 imports acting as a single point of failure, entry generators at 2,390 lines, and shim files that contain implementation logic rather than thin wrappers.

Next.js has 3 consistency issues: the app-render.tsx god module (critical), large configuration files (medium), and error class inconsistency across packages (medium). Vinext has 6.

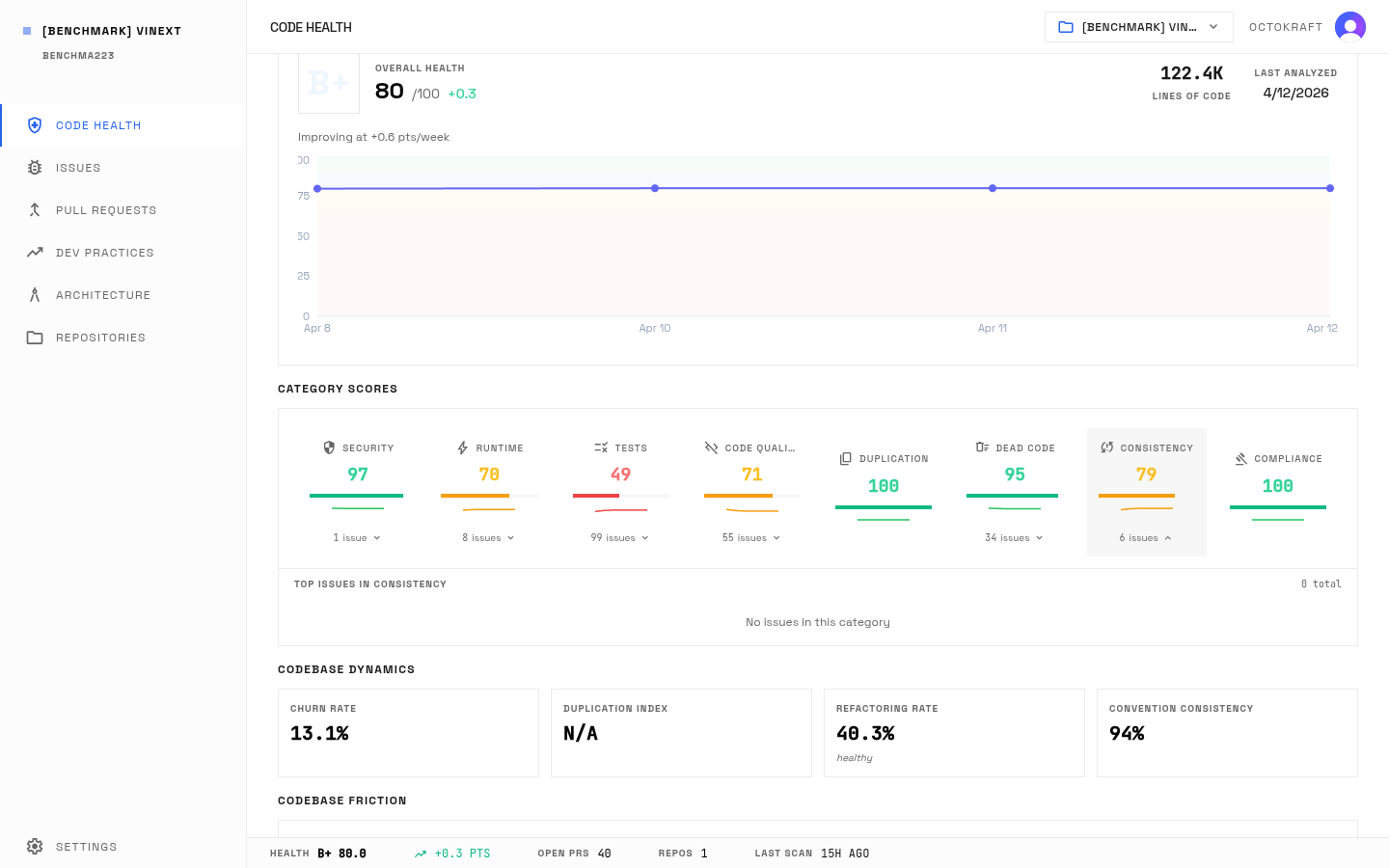

Vinext's consistency panel: 93.9% convention adherence but 78.9 consistency score. The gap comes from architectural misalignment, not naming or formatting.

Vinext's detected conventions: the AI follows naming patterns and async/await conventions with high fidelity. 93.9% adherence across 14 detected conventions.

Dead Code: 98.2 vs 94.7

Next.js has 344 dead code issues, concentrated in compiled @babel/runtime helpers and unreferenced functions in compiled dependencies. Vinext has 34, spread across unreferenced variables and functions. A fresh codebase generated in a week accumulates less dead code than a 10-year-old framework carrying compiled dependencies, but not zero.

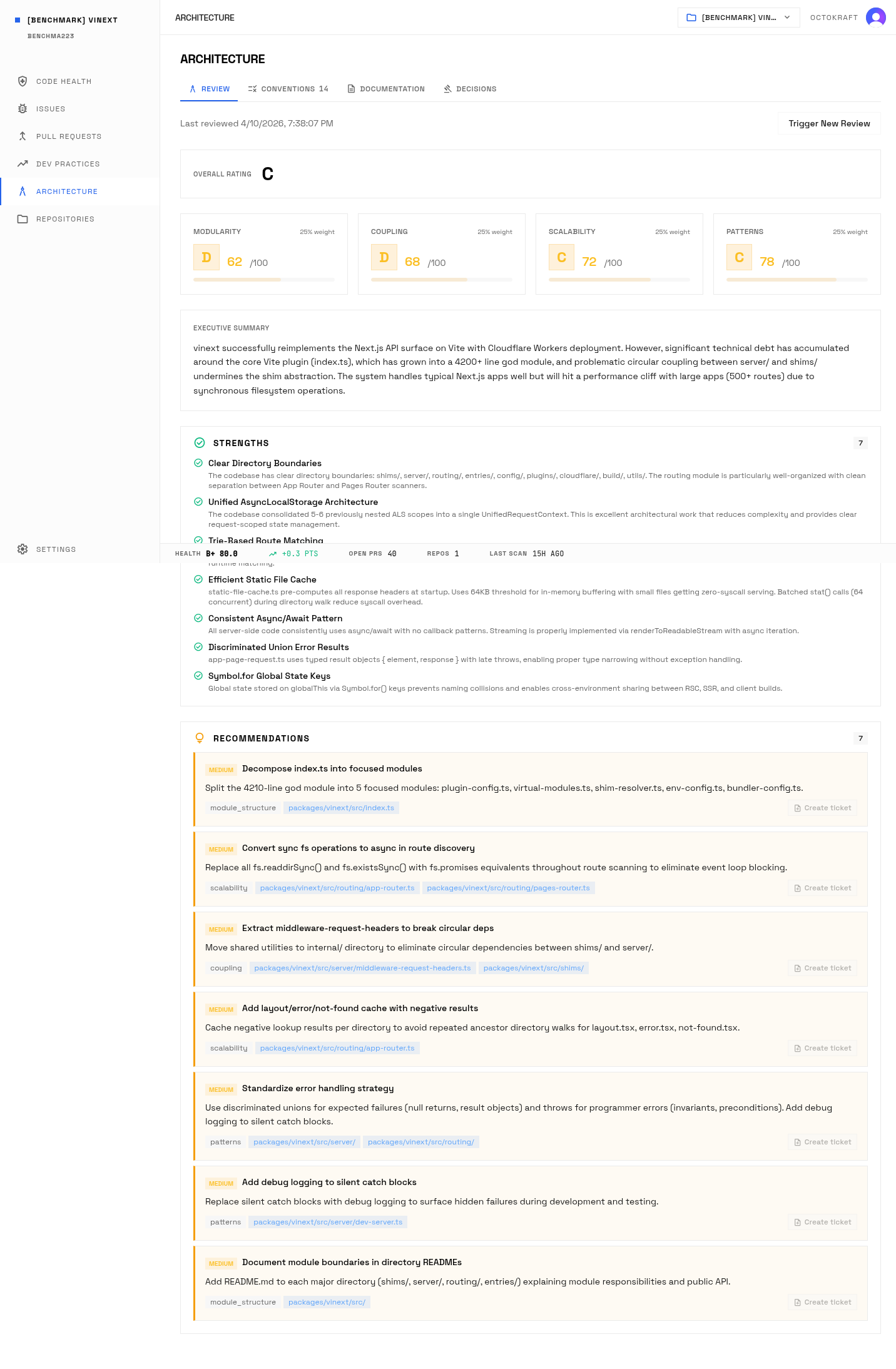

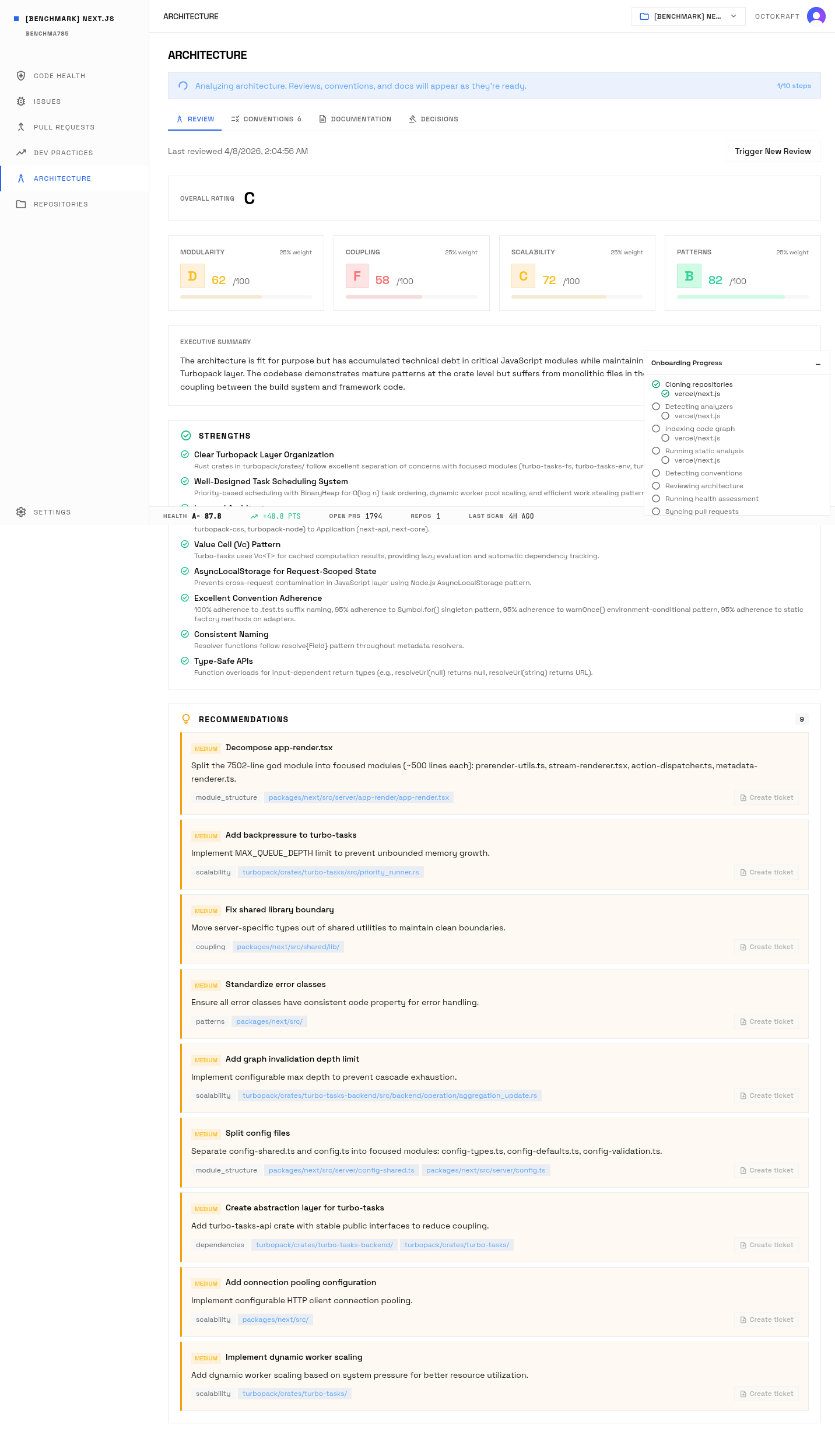

Architecture: C vs C

Both projects receive an identical C rating on architecture, but the sub-scores differ.

| Dimension | Next.js | Vinext |
|---|---|---|
| Modularity | 62 | 62 |
| Coupling | 58 | 68 |
| Patterns | 82 | 78 |
| Scalability | 72 | 72 |

Vinext's coupling score leads by 10 points. The architecture review found that its interface abstractions (pluggable CacheHandler, streaming-first rendering) represent good coupling practices even though the implementation has circular dependency problems at the shim layer.

Next.js's tight coupling between turbo-tasks-backend and turbo-tasks, and shared library boundary violations where server-specific types leak into shared utilities, pull its coupling score down.

Heritage debt vs velocity debt. Both score C.

Vinext architecture review: C grade. Good coupling (68) from pluggable CacheHandler and streaming abstractions, but circular dependencies at the shim layer hold modularity at 62.

Next.js architecture review: C grade. Higher patterns score (82) from years of established conventions, but tight coupling between turbo-tasks packages pulls coupling to 58.

Critical Density

Raw issue counts are misleading when the codebases differ by 15x. Next.js has 2,065 total issues. Vinext has 203. The picture changes after filtering to critical and high-severity issues and normalizing by codebase size.

| Metric | Next.js | Vinext |
|---|---|---|
| Total Issues | 2,065 | 203 |
| Critical Issues | 2 | 2 |
| High Issues | 8 | 7 |
| Critical+High Issues | 10 | 9 |
| LOC | 1,832,916 | 122,393 |
| Critical+High per 100K LOC | 0.55 | 7.35 |

Vinext has 7.35 critical and high-severity issues per 100K lines of code. Next.js has 0.55. That is a 13.4x difference. Vinext's severe issues are concentrated in a codebase one-fifteenth the size.
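The density figures can be reproduced from the table with a few lines of arithmetic. The sketch below uses the issue counts and LOC exactly as reported above. The helper name `severePer100k` is just for illustration.

```typescript
interface IssueCounts {
  critical: number;
  high: number;
  loc: number;
}

// Critical plus high-severity issues per 100,000 lines of code.
function severePer100k(counts: IssueCounts): number {
  return ((counts.critical + counts.high) / counts.loc) * 100000;
}

// Figures copied from the table above.
const nextjs: IssueCounts = { critical: 2, high: 8, loc: 1832916 };
const vinext: IssueCounts = { critical: 2, high: 7, loc: 122393 };

console.log(severePer100k(nextjs).toFixed(2)); // 0.55
console.log(severePer100k(vinext).toFixed(2)); // 7.35

// Ratio of the rounded densities, matching the 13.4x quoted in the text.
console.log((7.35 / 0.55).toFixed(1)); // 13.4
```

The 13.4x figure comes from dividing the rounded densities. Dividing the unrounded values gives roughly 13.5x, which does not change the conclusion.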

The Churn Signal

| Metric | Next.js | Vinext |
|---|---|---|
| Churn Rate | 2.33% | 13.1% |
| Refactoring Rate | 9.0% | 40.0% |
| Refactoring Interpretation | Healthy | Healthy |
| Lines Added (30d) | 8,787 | 11,727 |
| Lines Deleted (30d) | 2,549 | 4,694 |

Vinext's churn rate is about 5.6x higher than Next.js's. Its refactoring rate is 40%, classified as "healthy" by the analysis pipeline, compared to Next.js's 9%.

The codebase is stabilizing. The initial AI sprint generated the code. The weeks following have been dedicated to reworking, patching, and stabilizing it. Both churn and refactoring rates are moving toward equilibrium.

What Static Analysis Sees and What It Misses

Octokraft's static analysis gave Vinext a 96.9 on security. External researchers found 31+ vulnerabilities. Both of those statements are accurate, and they are not contradictory.

Static analysis examines code patterns: injection surfaces, missing headers, path traversal, hardcoded secrets, unsafe functions. Vinext's code does not contain those patterns. The AI was disciplined about not introducing known-bad security constructs. It did not use eval(), did not hardcode API keys, did not leave SQL injection surfaces. The patterns the security scanner checks for are absent.

The 31+ externally discovered vulnerabilities live in a different layer. Hacktron's four critical findings:

- Race condition in `AsyncLocalStorage`: Cross-request state pollution via unsafe `enterWith()` and fallback to shared state. One user's authentication tokens become readable by another user's request.
- Cache poisoning via missing auth headers: Authorization and Cookie headers omitted from fetch cache keys. The first requester's response is served to all subsequent users, regardless of their authentication state.
- Middleware bypass via double decoding: Double-encoded payloads (e.g., `/%2561dmin`) bypass middleware checks while reaching protected routes normally.
- API route middleware exclusion: API routes silently excluded from middleware by default. Global auth middleware protects page routes but leaves `/api/*` endpoints unprotected.

None of these are single-line code defects. They are interaction bugs: the middleware module does not know the routing module silently excludes API routes. The cache module does not know the auth module expects Authorization headers in cache keys. The AsyncLocalStorage wrapper does not know the fallback path creates shared state across requests. Each component, examined individually, looks correct. The vulnerability exists in the space between them.
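The double-decoding case shows how two individually reasonable functions can combine into a bypass. The sketch below is a simplified reconstruction of the general pattern, not Vinext's code: `middlewareAllows` and `routeFor` are hypothetical, and it assumes the middleware decodes the path once while the router decodes it a second time.

```typescript
// Hypothetical middleware check: decodes the raw path once, then blocks /admin.
function middlewareAllows(rawPath: string): boolean {
  const seen = decodeURIComponent(rawPath);
  return !seen.startsWith("/admin");
}

// Hypothetical router: decodes the path, then decodes the result again.
// The second decode is the interaction bug.
function routeFor(rawPath: string): string {
  const once = decodeURIComponent(rawPath);
  return decodeURIComponent(once);
}

const payload = "/%2561dmin";

// The middleware sees "/%61dmin", which does not start with "/admin".
console.log(middlewareAllows(payload)); // true
// The router ends up at the protected route.
console.log(routeFor(payload)); // /admin
// A direct request is still blocked, so a test that only tries "/admin" passes.
console.log(middlewareAllows("/admin")); // false
```

Read alone, each function looks fine. The mismatch only appears when the same request passes through both, which is why decoding once at a single boundary and handing every later layer the same normalized path is a common defense.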

Hacktron's write-up puts it directly: "Vulnerabilities do not live there. They live in the negative space, and in complex interactions between layers, the stuff nobody wrote a test for."

What static analysis and structural graph analysis do catch: the consistency gaps (78.9 vs 94.7), the architectural misalignment (circular dependencies, god modules, duplicate logic across dev and prod servers), and the critical density (7.35 critical+high per 100K LOC vs 0.55). These are not the vulnerabilities themselves. They are the structural conditions where runtime vulnerabilities are more likely to live. A codebase with 6 consistency issues, 2 critical findings, and circular dependencies between its shim and server layers has more interaction surfaces where undiscovered bugs can hide.

The testing analysis caught the specific gaps: middleware execution order not tested, RSC/SSR environment isolation not tested, error boundary resolution order not tested, ISR production tests all skipped. Those gaps map to the exact locations where the vulnerabilities were found. The analysis identified where the tests were missing. It did not and could not identify the specific runtime behavior those missing tests would have caught.

A high static security score means the code does not contain known-bad patterns. It does not mean the code is secure. Runtime security properties, such as request isolation, cache key construction, and middleware execution order, require dynamic analysis, adversarial testing, or focused human review. This analysis pipeline measures the first. The external audits measured the second. Both produced accurate results about different things.
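Cache key construction is a useful example of a property that pattern scanning does not flag. In the hypothetical sketch below, neither key function contains a known-bad construct, yet only one of them keeps two users' responses apart. The function names and header values are assumptions made for illustration.

```typescript
type HeaderMap = Record<string, string | undefined>;

// Hypothetical naive key: the URL alone, so every user shares one cache entry.
function naiveKey(url: string, _headers: HeaderMap): string {
  return url;
}

// Hypothetical auth-aware key: folds identity-bearing headers into the key.
// A real implementation would likely hash these values rather than embed
// credentials in the key; this sketch keeps them readable.
function authAwareKey(url: string, headers: HeaderMap): string {
  const auth = headers["authorization"] ?? "";
  const cookie = headers["cookie"] ?? "";
  return JSON.stringify([url, auth, cookie]);
}

const url = "https://example.com/api/profile";
const alice: HeaderMap = { authorization: "Bearer alice-token" };
const bob: HeaderMap = { authorization: "Bearer bob-token" };

// Same key for both users: whoever asks first populates the entry for everyone.
console.log(naiveKey(url, alice) === naiveKey(url, bob)); // true
// Distinct keys: each user gets a separate entry.
console.log(authAwareKey(url, alice) === authAwareKey(url, bob)); // false
```

Telling these two apart requires knowing which headers carry identity in a given deployment, which is the kind of context an adversarial test or a focused review brings and a pattern rule does not.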

Methodology

Both projects ran through the same four-stage Octokraft pipeline as the 24-project benchmark: language-appropriate static analysis, tree-sitter knowledge graph extraction, LLM-powered behavioral analysis, and automated convention detection. Same rubric, same severity multipliers, same category weights. The full methodology, including scoring math, category definitions, and pipeline stages, is documented in the benchmark post.

Both projects used TypeScript-ecosystem static analyzers. The security scoring reflects patterns visible in the code graph. It does not include dynamic analysis, penetration testing, or runtime behavioral testing. External vulnerability findings (Vercel's 7, Hacktron's 24 validated) were not factored into the Octokraft scores and are presented separately.

Data from Octokraft's analysis pipeline, April 2026. External vulnerability data from Hacktron's independent audit and Vercel's public disclosure.